d/acc

Chapter 6, part 1 of Technological Metamodernism

Reader: Alright, we’ve covered the proto-metamodern thinkers—Illich, Schumacher, Bookchin, Weiser/Brown. But those frameworks were developed decades ago. What about contemporary approaches? Surely there are people trying to apply this type of thinking to our current technological moment?

Hilma: Indeed. And one approach that’s gained traction recently is “d/acc.” The term was coined by Vitalik Buterin in his late 2023 essay “My techno-optimism”, with the “d” referring to both “differential/defensive” and “decentralized/democratic.”

Reader: So it’s yet another variant of accelerationism? I thought we established that e/acc was problematic.

Hilma: d/acc emerged explicitly as a response to e/acc—a way of saying “yes to acceleration, but not acceleration of everything.” It’s an attempt to be selective, intentional, and directional about which technologies we accelerate.

The basic insight is deceptively simple: not all technologies are created equal. Some make the world more dangerous; others make it safer. Some concentrate power; others distribute it. Some enhance human agency; others diminish it. If we’re going to continue technological development—and realistically, we are—then we should be smart about which technologies we prioritize.

Let’s take biosecurity as an example. On the offensive side, you have technologies that make it easier to create dangerous pathogens—DNA synthesis becoming cheaper and more accessible, gain-of-function research that makes viruses more transmissible, tools that lower the barrier to bioengineering. On the defensive side, you have technologies that make us more resilient to pandemics—rapid vaccine platforms, wastewater monitoring, air filtration, broad-spectrum antivirals, early detection systems.

A d/acc approach says: slow down or restrict the offensive technologies, accelerate the defensive ones. Don’t just accept the default trajectory where both advance at whatever rate market forces dictate. Be intentional. Use funding, regulation, social pressure, and cultural norms to shape which technologies develop faster.

Reader: I like the sound of that. But surely Vitalik wasn’t the first person to be thinking along these lines?

Hilma: That’s true. d/acc builds directly on an earlier concept called “differential technological development,” or DTD.

DTD was first articulated by philosopher Nick Bostrom in a 2002 paper on existential risks. The core principle was simple: “trying to retard the implementation of dangerous technologies and accelerate implementation of beneficial technologies, especially those that ameliorate the hazards posed by other technologies.”

Bostrom was writing in the context of thinking about existential risks—threats that could permanently curtail humanity’s potential or even cause extinction. He was particularly concerned about nanotechnology and artificial superintelligence. His argument was that even if we can’t stop powerful technologies from being developed eventually, we can influence when they arrive and in what sequence.

Reader: Sequence matters?

Hilma: Enormously. Consider a counterfactual: what if nuclear weapons had never been followed by second-strike capabilities like submarine-based missiles? The Cold War would have been vastly more unstable, with much higher risk of nuclear war. Both sides would have faced intense pressure to strike first before being struck. Developing second-strike capability, which makes it impossible to eliminate an enemy’s ability to retaliate, stabilized the situation. Not perfectly, but significantly.

Or imagine if we develop advanced nanotechnology—molecular assemblers that can build almost anything—before developing defensive technologies like immune-system-like security for physical matter. There’d be a window of vulnerability where destructive actors could create self-replicating nanobots before anyone has defenses. The order matters.

Reader: So DTD is basically “build shields before swords”?

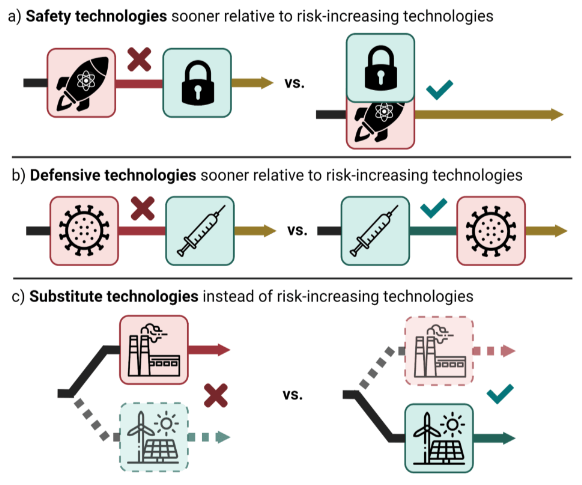

Hilma: That’s the intuition, yes. Though the reality is more textured. Sandbrink and colleagues at Oxford have developed a more sophisticated framework that identifies three types of risk-reducing technologies:

Safety technologies modify risk-increasing technologies to make them less dangerous. Electronic locks on nuclear weapons—permissive action links—are a perfect example. They don’t eliminate nukes, but they prevent unauthorized or accidental launches. DNA synthesis screening is another—it doesn’t prevent DNA synthesis, but it makes it harder to synthesize dangerous pathogens.

Defensive technologies provide protection without modifying the dangerous technology itself. Vaccines are the paradigmatic case—they don’t make viruses less dangerous, but they make us immune. Cybersecurity tools that detect and block malware are another example.

Substitute technologies achieve the same benefits as a risky technology but with fewer dangers. Renewable energy substitutes for fossil fuels. Non-viral gene therapy delivery methods could substitute for viral vectors that might be weaponized.

The crucial insight: the timing of these relative to risk-increasing technologies matters profoundly. Developing vaccines before someone creates an engineered pandemic prevents devastation. Developing them after means we’re scrambling to respond to catastrophe.

Reader: This makes sense in principle, but how realistic is it? Can we actually predict which technologies will be dangerous and which will be protective before they’re developed?

Hilma: That’s the hard question, and DTD proponents don’t claim perfect foresight. But they argue—convincingly, I think—that we’re not completely blind either. Some predictions are relatively straightforward.

When you see research aimed at making viruses more transmissible in mammals, you can anticipate that it creates pandemic risk. When you see proposals for cheap, accessible DNA synthesis machines without screening mechanisms, you can foresee bioweapon proliferation concerns. When you see AI systems optimized for engagement through emotional manipulation, you can predict attention capture and polarization.

However, as Michael Nielsen points out, it’s often genuinely difficult to determine whether a technology increases or decreases risk. He calls this the “risk sign problem”.

Take nuclear power. It reduces carbon emissions (good for climate), but creates radioactive waste and proliferation risks (bad for security). Is it DTD to accelerate nuclear development? The answer depends on how you weigh climate risk against nuclear risk, and reasonable people disagree.

This ambiguity is a real problem. DTD is most useful when it’s clearest—vaccines are defensive, bioweapons are offensive. But many technologies fall into grey areas where the classification depends on complex empirical questions and value judgments. Nielsen suggests we need better frameworks for making these assessments, possibly including prediction markets and systematic risk analysis.

But here’s what I find most interesting about DTD: it fundamentally challenges the idea that we should just let markets and “natural” technological development determine what gets built. It says we need intentional direction. That’s a profoundly political claim dressed up in seemingly neutral language about risk.

Reader: How so?

Hilma: Because asserting that we should differentially develop technology presupposes that “we”—some collective—should have coordinated agency over technological development. It rejects market fundamentalism (let individual actors pursue profit and everything will work out) and pure libertarianism (any intervention in technological development is illegitimate).

DTD says: there are collective interests in steering technology that individual incentives won’t automatically serve. We need to coordinate to slow some things down and speed other things up, even when market forces point differently.

Vitalik and the DTD crowd are aligned on this point. In his first essay on d/acc, he writes:

Often, it really is the case that version N of our civilization’s technology causes a problem, and version N+1 fixes it. However, this does not happen automatically, and requires intentional human effort. The ozone layer is recovering because, through international agreements like the Montreal Protocol, we made it recover. Air pollution is improving because we made it improve. And similarly, solar panels have not gotten massively better because it was a preordained part of the energy tech tree; solar panels have gotten massively better because decades of awareness of the importance of solving climate change have motivated both engineers to work on the problem, and companies and governments to fund their research. It is intentional action, coordinated through public discourse and culture shaping the perspectives of governments, scientists, philanthropists and businesses, and not an inexorable “techno-capital machine”, that had solved these problems.

Reader: Okay, I follow the DTD framework. You said d/acc adds a “decentralized/democratic” dimension to it. What’s that about?

Hilma: DTD asks: “Which technologies should we develop faster?” d/acc adds: “And how should those technologies be structured?” The “differential/defensive” part is DTD. The “decentralized/democratic” part is Vitalik’s innovation.

Here’s why this matters: you could pursue DTD in a completely centralized, authoritarian way. Imagine a world government with perfect surveillance that detects and prevents anyone from developing dangerous technologies while directing massive resources to defensive ones. That’s DTD! But it’s also dystopian—the cure might be worse than the disease.

This is exactly the dilemma Bostrom explores in his Vulnerable World Hypothesis. He considers scenarios where catastrophically dangerous technologies, “black balls” as he calls them, become accessible to individuals or small groups. His conclusion is sobering: preventing civilizational devastation from such technologies might require “extremely effective preventive policing” and “effective global governance.” In some scenarios, he suggests, we might need ubiquitous real-time surveillance to prevent individuals from accessing dangerous capabilities.

Reader: That’s terrifying. So the price of survival is total surveillance?

Hilma: That’s one possible path, yes. But d/acc says: what if there’s another way? What if instead of maximizing centralized control, we maximize decentralized defense? What if we build technologies that make it easier to protect than to attack, easier to detect threats than to hide them, easier to coordinate responses than to cause harm?

This reframes the entire challenge. Instead of asking “how do we give someone enough power to stop all dangerous actors,” we ask “how do we make defensive capabilities so robust and distributed that dangerous actors can’t succeed?”

The classic example is encryption. You could try to prevent bad actors from hiding their communications through centralized surveillance—monitor everything, backdoor all devices, give governments keys to all encrypted channels. That’s the authoritarian DTD approach. Or you could make everyone’s communications more secure through strong encryption, making mass surveillance impossible but also making it harder for anyone—including governments—to violate privacy. That’s the d/acc approach.

Reader: But doesn’t that also protect criminals and terrorists?

Hilma: Yes, and that’s the tension. The d/acc bet is that widely distributed defensive capabilities create more net benefit than centralized offensive capabilities, even accounting for the fact that some bad actors will exploit the same protections.

The crucial claim—and it is speculative—is that defense-favoring technologies enable healthier governance overall. Vitalik uses the metaphor of Switzerland, which has maintained relative freedom and decentralized governance partly because of defense-favoring geography—literal mountains that make invasion hard but don’t prevent trade and voluntary cooperation.

d/acc asks: can we create a similar dynamic through technology? What are the “digital mountains” that could do for online life what the Alps do for Swiss independence? That’s what encryption, decentralized networks, and privacy-preserving computation potentially offer.

Reader: It’s been two years since Vitalik’s original essay. How has the idea progressed over that period?

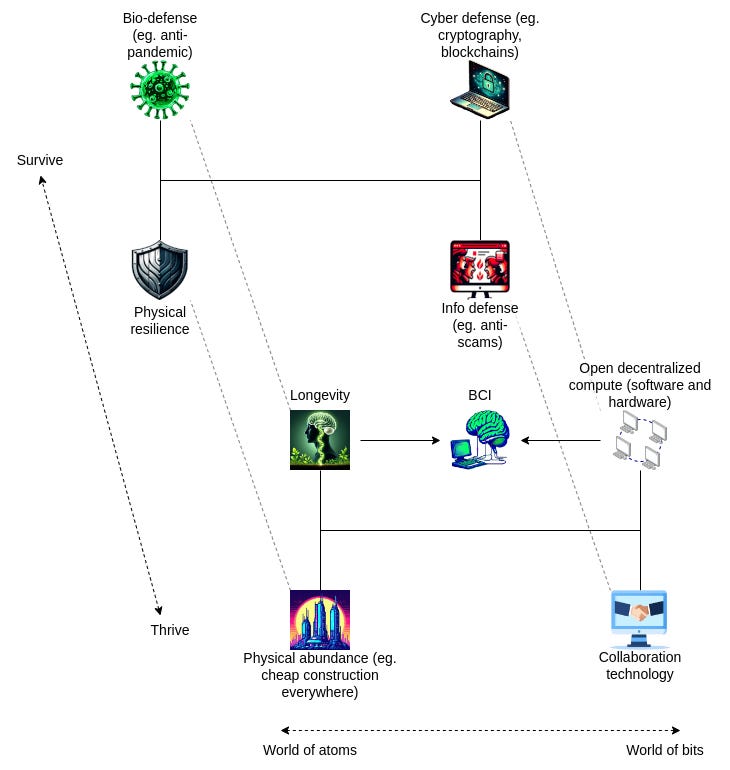

Hilma: In early 2025, Vitalik published a follow-up called “d/acc: one year later” that significantly refined and expanded the framework. The most important addition is what he calls the survive/thrive dimension—recognizing that defensive technologies aren’t just about preventing catastrophes but also about enabling human flourishing.

He introduces an eight-part framework. It covers four domains, split between atoms and bits: bio and physical are physical-world technologies, while cyber and info are information-world technologies. Each domain is then divided along a survive versus thrive axis: technologies that protect us from harm versus technologies that help us reach our potential.

Reader: Can you walk me through these eight categories?

Hilma: Let’s start in the world of atoms—the physical realm. Here we have bio/survive and bio/thrive, and physical/survive and physical/thrive.

Bio/survive is about protecting ourselves from biological threats. This includes everything we’ve discussed around pandemic prevention: vaccine platforms, wastewater monitoring, air filtration, pathogen detection. But it extends further—defenses against natural aging and disease, protection from environmental toxins, resilience to climate impacts on health. The goal is robust biological security at both individual and collective levels.

Bio/thrive is where defensive biology becomes enhancement. This includes longevity research aimed at extending healthy lifespan, therapies that reverse age-related decline, treatments for chronic conditions that don’t just manage symptoms but restore function. It also encompasses mental health innovations, cognitive enhancement that doesn’t create dependency, and eventually perhaps technologies like brain-computer interfaces that augment human capabilities. The key is that these serve human flourishing rather than merely preventing death.

Physical/survive addresses non-biological physical risks—resilient infrastructure that continues functioning when centralized systems fail, decentralized energy that keeps working during grid collapses, local food production insulated from supply chain disruptions. Vitalik mentions self-sufficient space colonies as an extreme example of physical/survive—if Earth becomes uninhabitable, humanity survives elsewhere. But more mundanely, it includes things like earthquake-resistant construction, storm-hardened telecommunications, backup power systems.

Physical/thrive is about using physical technology to enhance quality of life: better transportation that’s more accessible and sustainable, housing that’s healthier and more beautiful, physical abundance such that nobody lacks basic material needs. It includes space exploration not merely as backup but as a way of expanding human experience, and manufacturing technologies that create prosperity without planetary destruction.

Reader: Okay, that covers the world of atoms. What about bits?

Hilma: Right, the information realm has its own survive/thrive dimensions.

Cyber/survive is about defending digital infrastructure—protecting against hacking, preventing system failures, maintaining security of communications and transactions. This includes the cryptography we mentioned earlier, blockchain security, formal verification of code, sandboxing and isolated computing environments. It’s making the digital realm safe for human activity.

Cyber/thrive extends this to positive capabilities: censorship-resistant communication, privacy-preserving computation, decentralized coordination tools. It’s not just preventing attacks but enabling digital freedom. Zero-knowledge proofs that let you prove things about yourself without revealing private information. Decentralized identity that you control rather than one that platforms manage. Tools for collective decision-making that don’t require trusted intermediaries.

Info/survive deals with epistemic threats—misinformation, manipulation, coordinated deception campaigns. This is where Community Notes fits—helping people identify truth and falsehood in adversarial environments. Prediction markets serve this function too—they aggregate information in ways that are hard to manipulate. The goal is maintaining shared reality even when powerful actors try to distort it.

Info/thrive is about enhancing collective intelligence and wisdom. This includes Pol.is and similar tools for finding common ground across disagreement, mechanisms for high-quality deliberation at scale, ways of surfacing expertise without creating information monopolies. It’s not just defending against bad information but actively improving how we think together.
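One way to picture how tools like these find common ground across disagreement is a bridging-style score: content ranks highly only if people from different camps each find it helpful. The sketch below is a deliberately simplified illustration, not the algorithm that Community Notes or Pol.is actually runs. It assumes raters already carry a group label (real systems are more involved and generally have to infer viewpoints from behavior), and the function name `bridging_score` is hypothetical.

```python
def bridging_score(ratings):
    """Return the lowest per-group 'helpful' rate for one piece of content.

    ratings: list of (group_label, helpful) pairs, where helpful is a bool.
    Assumption for illustration: group labels are given, not inferred.
    """
    by_group = {}
    for group, helpful in ratings:
        by_group.setdefault(group, []).append(1.0 if helpful else 0.0)
    if len(by_group) < 2:
        # With only one group rating, there is no evidence of cross-group agreement.
        return 0.0
    # Taking the minimum means one enthusiastic camp cannot carry the score alone.
    return min(sum(votes) / len(votes) for votes in by_group.values())


# A note loved by one camp and rejected by the other.
partisan = [("A", True)] * 10 + [("B", False)] * 10
# A note that most raters in both camps find helpful.
bridging = [("A", True)] * 7 + [("A", False)] * 3 + [("B", True)] * 8 + [("B", False)] * 2

partisan_score = bridging_score(partisan)
bridging_score_value = bridging_score(bridging)
```

Under this rule the partisan note scores zero despite having ten enthusiastic supporters, while the note with broad but less intense support scores well. That is the design choice that separates bridging from simple popularity ranking.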

Reader: I notice you haven’t mentioned AI much in this framework. Where does artificial intelligence fit?

Hilma: That’s deliberate and important. d/acc doesn’t center AI the way both e/acc and the doomers do. For e/acc, AI is the supreme technology to accelerate. For doomers, it’s the supreme risk to prevent. Both make superintelligent AI the hinge of history.

d/acc takes a different stance: AI is one technology among many, and how it impacts humanity depends entirely on how it’s built and deployed.

AI as a tool that augments human capability while remaining under human control, so-called ‘Tool AI’—that’s d/acc. AI as autonomous agents pursuing their own goals—that’s potentially dangerous and should be approached cautiously.

AI concentrated in a few corporations or governments—that’s centralizing and concerning. AI that’s open-source and widely accessible—that’s decentralizing and potentially empowering.

The framework shifts attention from “how fast should we build AGI” to “what kinds of AI systems should we build, and how should they be governed?” It’s not about speed but about direction and structure.

Reader: You’ve been building toward calling d/acc “metamodern”. It’s time to make your case. What specifically makes this framework metamodern rather than just sensible risk management?

Hilma: Let me walk through the four key aspects of metamodern sensibility we identified in Chapter 4 and show how d/acc embodies each.

First: a belief in development and progress. d/acc is fundamentally optimistic about humanity’s ability to solve problems through intentional action. It doesn’t retreat into degrowth fatalism or pretend we can return to some pre-technological Eden. Instead, it says: yes, we can develop better vaccines, better air filtration, better coordination tools—and these developments genuinely make life better. But this optimism has passed through the fire of critique. d/acc acknowledges that progress creates new problems, that every solution has costs, that the future will require ongoing adaptation rather than arrival at some fixed destination. It’s optimism that knows its own limitations.

Second: aiming at reconstruction. d/acc doesn’t stop at critiquing surveillance capitalism or lamenting the attention economy; it actively builds alternatives. Quadratic funding mechanisms, retroactive public goods funding, open-source biosecurity infrastructure, decentralized identity systems: these are concrete reconstructions, not just theoretical alternatives. The framework inherits postmodernism’s insights about power and incentives, then uses those very insights to design systems that work differently. It understands that it’s not enough to deconstruct the attention economy; we must construct attention-respecting alternatives. Not enough to critique centralized platforms; we must build decentralized ones that actually function.

Third: both-and thinking. d/acc holds simultaneously that technology is profoundly beneficial AND that many technologies are dangerous. It believes in acceleration AND in caution. It’s optimistic about human potential AND realistic about human nature and coordination problems. It wants robust defense AND individual freedom—rejecting the false choice between security through surveillance and vulnerability through liberty. The survive/thrive framework embodies this synthesis too: we can work on technologies that protect us from catastrophe AND that help us flourish. A world that merely survives, huddled behind defenses, isn’t worth building; a world that pursues flourishing while ignoring existential threats won’t last long enough to enjoy it.

Fourth: its Game Change orientation. d/acc explicitly aims to change the rules of technological development, not just play better within existing rules. Game Acceptance would be: “Technological development follows its own logic—market forces, competitive dynamics, the techno-capital machine. Trying to steer it is futile; the best we can do is trust that market competition will eventually produce good outcomes.” Game Denial would be: “If we just convince enough people that certain technologies are dangerous, they’ll choose not to build them—we can solve this through moral appeals and raising awareness without needing to change underlying incentive structures.” Game Change is: “Let’s understand exactly why market forces and competitive dynamics push toward offensive, centralizing technologies, then deliberately construct alternative funding mechanisms, technical architectures, and cultural norms that make defensive, decentralizing technologies the path of least resistance.”

Reader: Okay, I can see these connections to metamodernism. But honestly, it all sounds rather... convenient? Like d/acc gets to claim all the good parts of both acceleration and caution while avoiding commitment to either? Let’s hear the critiques.

Hilma: I’ll start with the most fundamental: the classification problem that we touched on earlier with DTD. Deciding what’s defensive versus offensive, decentralizing versus centralizing, is genuinely difficult and often contested.

This classification ambiguity means d/acc can become a Rorschach test—people project their preferred technologies onto it and claim them as defensive/decentralized. We need much more rigorous frameworks for actually assessing which technologies genuinely distribute power versus merely claiming to.

There’s also a deep tension around the feasibility of coordinated action. d/acc requires coordinated intention: deliberate choices by funders, researchers, policymakers, and cultural leaders to prioritize certain technologies over others. But the very existence of coordination problems is part of what makes the world vulnerable to begin with.

Vitalik acknowledges this: “Ethereum is actually attempting to execute on the old libertarian dream of a market-based society that uses social pressure, rather than government, as the antitrust regulator.” Notice the verb—attempting. It’s very hard. Maintaining client diversity, preventing staking centralization, encouraging geographic distribution—all require constant effort swimming against natural currents toward concentration and monopoly.

If it’s this difficult within a community explicitly committed to decentralization, how realistic is it for the broader world? Market incentives push toward whatever’s most profitable, which often means offensive technologies (weapons sell) and centralizing systems (economies of scale). Cultural pressure can push back somewhat, but it’s a weak force compared to billions in profit.

d/acc risks being a vision that works only among true believers in small communities, unable to scale to the civilizational level at which it would need to work to address existential risks.

Reader: And there’s the question of whether defensive technologies actually exist at sufficient potency, right?

Hilma: Yes, the asymmetry problem. In many domains, offense genuinely has inherent advantages over defense. It’s typically easier to destroy than to create, to attack than to defend, to break than to fix. One person with bioengineering skills might create a pandemic that kills millions; defending against all possible pathogens requires anticipating what they might build and having responses ready.

Cryptography is one domain where defense actually has systematic advantages—math lets you create locks exponentially harder to pick than the effort to create them. But this is relatively rare. In most physical domains, and even in some information domains, the attacker has advantages.
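The asymmetry in cryptography can be made concrete with a little arithmetic. The sketch below assumes the attacker’s only option is exhaustive key search, which is a simplification, and the guess rate is a hypothetical figure chosen purely for illustration. The function names are made up for this example.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 3600


def expected_guesses(key_bits: int) -> int:
    """Expected tries for exhaustive search: half of the 2**key_bits keyspace."""
    return 2 ** (key_bits - 1)


def years_to_break(key_bits: int, guesses_per_second: float) -> float:
    """Expected brute-force time in years at a fixed guess rate."""
    return expected_guesses(key_bits) / guesses_per_second / SECONDS_PER_YEAR


# Hypothetical attacker speed, assumed only for illustration.
ATTACKER_RATE = 1e12

for bits in (64, 128, 256):
    print(f"{bits}-bit key: ~{years_to_break(bits, ATTACKER_RATE):.3g} years")
```

Each extra key bit costs the defender almost nothing to generate and store, yet doubles the attacker’s expected work. That is the sense in which the lock gets exponentially harder to pick than it is to make.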

If defense is genuinely harder than offense, d/acc might be pursuing an impossible dream. You can’t just will defensive technologies into existence through funding and cultural emphasis if the fundamental physics or mathematics don’t support them.

Reader: So what’s d/acc’s response to that concern?

Hilma: The response is essentially: we won’t know until we try much harder than we currently are. Maybe defense is harder, but we’re dramatically underinvesting in finding out. For every dollar spent on bioweapons research or AI capabilities, we spend roughly how much on comprehensive defensive systems? Probably far less. We might discover that with serious effort, defense is more feasible than we thought. Moreover, even if defense is harder, making it less hard still matters. If we can shift the offense/defense balance even somewhat toward defense, it could change the strategic landscape significantly.

Reader: These are significant problems. Are you saying d/acc doesn’t work, or just that it’s partial and imperfect?

Hilma: Partial and imperfect—but still valuable. Whatever its limitations, d/acc is directing attention usefully—toward the structure and distribution of technological power, toward the distinction between offensive and defensive capabilities, toward the possibility of coordination without domination. These are the right questions even if we don’t have perfect answers.

Reader: Okay, I’m convinced that d/acc is interesting and valuable despite its limitations. But it also seems like a small movement—mostly crypto people and a few researchers. How do you actually get d/acc to scale? How do you shift technological development at civilizational scale?

Hilma: This is where d/acc thinking needs the most development, and where thoughtful people should be focusing their energy. Let me sketch several complementary approaches.

First, we need better funding mechanisms for public goods and defensive technologies. This is an area where Vitalik has done substantial work.

The core problem is that many defensive technologies are undersupplied by markets because their benefits are broadly distributed while costs are borne by specific builders. Quadratic funding, retroactive public goods funding, and similar mechanisms attempt to solve this. They create ways for communities to collectively fund work they value without requiring centralized grant-making authorities who can be captured or become bottlenecks. But these mechanisms have faced their own challenges—the 2021-2025 era of on-chain public goods funding relied heavily on subsidized token flows and treasury distributions that proved unsustainable when market conditions shifted. More fundamentally, most funding rounds optimized for popularity and social capital rather than tracking actual impact and outcomes. Projects got funded based on vibes and connections; whether they delivered meaningful results was rarely measured or rewarded.

This matters because the ultimate vision—getting governments and large institutions to provide sustained capital for public goods funding—depends entirely on demonstrating that these mechanisms actually work. If quadratic funding can’t show that it produces better outcomes than traditional grant-making, why would a city council or national government adopt it? The path forward requires rigorous impact measurement that can prove to skeptical institutional funders that decentralized allocation mechanisms outperform the alternatives. Without solving this problem, public goods funding remains a beautiful experiment rather than a scalable infrastructure for civilizational coordination.
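For readers unfamiliar with the mechanics, here is a minimal sketch of how a quadratic funding round can allocate a matching pool. It uses the commonly cited formula in which a project’s ideal funding is the square of the sum of the square roots of its individual contributions, with subsidies scaled down to fit a fixed pool. The project names and amounts are invented for illustration, and real rounds add safeguards (identity checks, caps, collusion resistance) that are not shown here.

```python
from math import sqrt


def quadratic_match(contributions, matching_pool):
    """Split a matching pool across projects using the basic QF formula.

    contributions: dict mapping project name -> list of individual amounts.
    Returns a dict mapping project name -> matching subsidy.
    """
    raw = {}
    for project, amounts in contributions.items():
        # Ideal QF funding is (sum of sqrt(c_i))^2; the subsidy is the
        # gap between that ideal and what donors gave directly.
        ideal = sum(sqrt(a) for a in amounts) ** 2
        raw[project] = max(ideal - sum(amounts), 0.0)
    total = sum(raw.values())
    if total == 0:
        return {project: 0.0 for project in raw}
    # Scale the subsidies so they exactly exhaust the fixed pool.
    return {project: matching_pool * r / total for project, r in raw.items()}


# Hypothetical round: both projects raise 100 directly.
round_contributions = {
    "air_sensors": [1.0] * 100,  # 100 donors giving 1 each
    "single_whale": [100.0],     # 1 donor giving 100
}
matches = quadratic_match(round_contributions, matching_pool=1000.0)
```

In this toy round the project with a hundred small donors receives the entire pool and the single-donor project receives nothing, even though both raised the same amount. The formula rewards breadth of support, which is also why it can end up tracking popularity rather than measured impact.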

But funding alone isn’t sufficient. We also need cultural and narrative work. Right now, the dominant stories in technology celebrate aggressive disruption—“move fast and break things,” “winner takes all,” “blitzscaling.” These narratives shape what ambitious people choose to work on and what investors choose to fund.

We need equally compelling narratives around building resilient systems, enabling human agency, creating genuine security. The challenge is that these are inherently less dramatic. A system that quietly works, preventing catastrophes that never happen, doesn’t generate the same mythic appeal as a founder who “changed everything.”

Part of this is prestige engineering. Academia figured out how to make university positions desirable despite relatively low pay by offering status, autonomy, and meaning. d/acc needs similar dynamics. Create prizes for defensive tech innovations. Build institutions that confer credibility. Develop career pathways where working on pandemic prevention or cyber defense or privacy tools is recognized as high-status, important work.

We don’t need to convert everyone, just enough. If even 10% of talented technologists orient toward defensive development, that’s enough to make significant progress. The goal isn’t unanimous agreement but sufficient critical mass.

Reader: Let’s say we solve all these problems—funding, culture, institutions, talent. What would success actually look like? How would the world be different?

Hilma: Let me paint you a picture, inspired by the Foresight Institute’s d/acc pathway.

It’s 2045. You’re in Bangkok, visiting friends. Your phone buzzes—not with a notification, but with a subtle haptic pattern you recognize. Your personal air quality monitor has detected an anomaly: trace amounts of a novel viral sequence in the building’s air. It’s not cause for alarm yet—the concentration is very low—but the pattern matches something that distributed biosecurity networks flagged yesterday in Seoul.

You glance at the prediction market integrated into your city’s public health dashboard. It shows 35% probability of this becoming a local outbreak, 8% probability of wider spread. Low, but not negligible. The anomaly was detected by community-operated air quality sensors scattered throughout the city—thousands of them, none owned by government or corporations, all contributing data through privacy-preserving protocols.

The pathogen sequence was identified this morning through wastewater analysis that runs continuously in cities worldwide. Within hours, specs for a targeted prophylactic were posted to open-source bio-defense repositories—not by the WHO or CDC, but by a distributed network of researchers whose work is funded through public goods mechanisms. Any adequately equipped facility can manufacture it.

You’re not worried. The local medical collective your neighborhood participates in already has the synthesis equipment and trained staff. If the prediction markets shift significantly higher—say above 60%—they’ll begin producing doses. Distribution will happen through the neighborhood network, probably via nasal spray that you can self-administer.

This scenario plays out a few times per year now: novel pathogens detected early, addressed locally, never given a chance to spread. The last actual pandemic was in 2031, and it was mild because the defensive infrastructure was mostly in place by then.

Reader: That’s a compelling vision for bio defense. What about other domains?

Hilma: Your digital life looks radically different too. Most of your data lives on devices you control—your phone, your laptop, your personal server if you’re technical. When you interact with services online, you choose what information to share and for how long. Default is privacy; sharing is deliberate.

You use social media, but it’s federated—your account lives on a server your neighborhood coop runs, but you can interact with people on any server. No platform can unilaterally change the rules or algorithmically manipulate what you see. If your current server’s moderation policies stop working for you, you move to another without losing your connections or content.

Misinformation still exists, obviously—people still believe and share false things. But you have access to multiple epistemic tools that help you navigate. Community Notes that show consensus across political divides. Prediction markets that aggregate information about factual questions. Trust networks where you can see what people you respect think about sources and claims. None of these are perfect; all are useful. And crucially, none are controlled by a single entity that could manipulate them.

Your city’s governance involves you more directly than your grandparents could have imagined. Not through requiring constant attention—you’re not voting on every little thing. But for major decisions, sophisticated collective intelligence tools help surface options, identify areas of consensus, and clarify actual disagreements. When you do participate, your voice is heard in ways that rough majoritarian democracy doesn’t achieve—quadratic voting for funding allocation, conviction voting for priorities, bridging-based algorithms that find widely acceptable solutions.
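To make one of those mechanisms concrete, here is a minimal sketch of quadratic voting. It assumes the usual rule that casting n votes on a single option costs n² voice credits, so strong preferences can be expressed but become increasingly expensive. The credit budget, the voter and option names, and the function names are all hypothetical. This is an illustration of the idea, not a description of any deployed system.

```python
from collections import defaultdict


def vote_cost(votes: int) -> int:
    """Voice credits needed to cast `votes` votes on one option (quadratic rule)."""
    return votes * votes


def tally(ballots: dict[str, dict[str, int]], budget: int) -> dict[str, int]:
    """Sum votes per option, rejecting any ballot that overspends its budget.

    Negative votes count against an option and cost the same as positive ones.
    """
    totals: dict[str, int] = defaultdict(int)
    for voter, ballot in ballots.items():
        spent = sum(vote_cost(v) for v in ballot.values())
        if spent > budget:
            raise ValueError(f"{voter} spent {spent} credits, budget is {budget}")
        for option, votes in ballot.items():
            totals[option] += votes
    return dict(totals)


# Hypothetical ballots: every voter gets the same 100 voice credits.
ballots = {
    "ana": {"air_filters": 9, "bike_lanes": 4},  # 81 + 16 = 97 credits
    "ben": {"air_filters": 3, "bike_lanes": 9},  # 9 + 81 = 90 credits
    "chi": {"bike_lanes": 5, "sensors": 8},      # 25 + 64 = 89 credits
}
results = tally(ballots, budget=100)
print(results)
```

The point of the design is the rising marginal cost: ana's ninth vote for air filters costs 17 credits on its own (81 minus 64), which is more than her four votes for bike lanes combined. A voter can push hard on the one thing they care about most, but at the price of having little say on everything else.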

Reader: This sounds almost utopian. What about the conflicts and failures?

Hilma: Oh, there are plenty. The local energy network has had two major technical failures in the past year, requiring coordination with the regional grid that didn’t go smoothly—disputes over price, over priority access, over who pays for the interconnection infrastructure. The federated social media is filled with drama about content moderation standards and server policies.

When a sophisticated cyberattack hit the regional banking network last year, the decentralized backup systems worked, but slowly. Some people couldn’t access their funds for three days. There was serious debate about whether the resilience was worth the efficiency cost, with some people calling for re-centralization.

And globally, the picture is very uneven. Your city’s defensive infrastructure is excellent. But plenty of places lack the resources or coordination to build equivalent systems.

The key difference from 2025 isn’t that problems are solved but that systems are more robust to failure and more distributed in power. When things break, they break locally rather than cascading globally. When institutions become corrupt or ineffective, people have alternatives rather than being trapped. When new threats emerge, defensive responses can be mounted quickly without requiring centralized authority.

There’s a widespread understanding that technological development has direction, that direction matters, and that we collectively have some agency over it. Not complete control—the world remains complex and surprises still happen. But intentional coordination shapes outcomes more than it did in 2025, when technological development was largely left to market forces and military competition.

Reader: I’m struck by something in your 2045 scenario. You described sophisticated collective intelligence tools, quadratic voting, bridging algorithms—but those felt almost like stage props compared to the biosecurity and cyber defense details. The “decentralized” part of d/acc seems well-developed, but the “democratic” part feels thinner. Is that a gap in the framework?

Hilma: You’ve identified d/acc’s most significant unfinished business. Vitalik added the “decentralized/democratic” dimension to prevent defensive technology from becoming authoritarian, but when you examine the literature closely, “decentralized” does most of the heavy lifting—cryptography, distributed systems, peer-to-peer networks. The “democratic” element remains underspecified: how do diverse populations actually coordinate at scale? How do they make collective decisions about which technologies to accelerate? How do they govern shared resources without either centralized authority or fragmentation?

This is where we need Audrey Tang and Glen Weyl’s “Plurality” framework. Where d/acc focuses on the question “how do we build defensive technologies that distribute power?”, Plurality asks the complementary question: “how do we harness digital technology to enable genuinely democratic coordination across difference?” Tang isn’t theorizing from the sidelines: as Taiwan’s former Digital Minister, she helped deploy systems like Pol.is for large-scale deliberation that actually work. Plurality provides the democratic depth that d/acc’s vision requires but doesn’t supply. That’s where we turn next.